This article contains information about AI tools and services. toolsstackai.com may receive compensation when you click on links to products or services mentioned in this content.

TL;DR: Nvidia has launched its NIM (Nvidia Inference Microservices) API, enabling developers to deploy optimized AI models on their own infrastructure with containerized solutions. The platform includes pre-built containers for popular models and enterprise features, positioning Nvidia to compete directly with cloud-based AI API providers.

Nvidia NIM API Brings Enterprise AI Deployment In-House

Nvidia has unveiled its NIM API platform, marking a significant shift in how organizations can deploy artificial intelligence models. The service allows companies to run containerized AI workloads on their own hardware rather than relying exclusively on cloud providers.

This launch represents Nvidia’s strategic move beyond hardware manufacturing into the AI services market. Organizations can now leverage their existing GPU investments while maintaining complete control over their data and infrastructure.

The timing coincides with growing enterprise demand for on-premises AI solutions. Many companies face regulatory requirements or security concerns that make cloud-based AI deployment challenging.

Pre-Built Containers for Popular AI Models

NIM arrives with ready-to-deploy containers for widely used AI models including Llama, Mistral, and Stable Diffusion. These containers come pre-optimized for Nvidia GPU architectures, eliminating weeks of configuration work for development teams.

The platform automatically handles GPU optimization based on available hardware. Developers can deploy the same container across different GPU configurations without manual tuning or code modifications.

Each container includes model-specific optimizations that maximize throughput and minimize latency. This approach is intended to keep performance consistent whether inference runs on a single GPU or is distributed across multiple nodes.

Furthermore, the containerized approach simplifies version management and rollback procedures. Teams can test new model versions in isolated environments before promoting them to production workloads.

Enterprise-Grade Features for Production Workloads

The Nvidia NIM API includes comprehensive monitoring and observability tools designed for production environments. These features provide real-time insights into model performance, resource utilization, and inference latency across deployments.

Model versioning capabilities allow organizations to manage multiple model versions simultaneously. Teams can gradually shift traffic between versions or run A/B tests to evaluate model improvements before full deployment.
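
To make the idea of gradual traffic shifting concrete, here is a minimal sketch of weighted routing between two model versions. It is not Nvidia's implementation; the function name `pick_version`, the version labels, and the weights are all hypothetical, and the article does not describe how NIM performs the split internally.

```python
import hashlib


def pick_version(request_id: str, weights: dict[str, float]) -> str:
    """Deterministically map a request ID to a model version by weight.

    Hypothetical helper for illustration only, not part of any Nvidia API.
    """
    if any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative")
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one weight must be positive")

    # Hash the ID to a stable point in [0, 1) so the same caller keeps
    # landing on the same version while the weights stay unchanged.
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    point = int.from_bytes(digest[:8], "big") / 2**64

    cumulative = 0.0
    chosen = None
    for version, weight in sorted(weights.items()):
        if weight == 0:
            continue  # a version with zero weight never receives traffic
        chosen = version
        cumulative += weight / total
        if point < cumulative:
            return version
    return chosen  # guards against floating-point rounding at the top end


# Example: send roughly 10% of requests to a candidate version.
split = {"model-v1": 0.9, "model-v2": 0.1}
print(pick_version("user-1234", split))
```

Hashing the request or user ID, rather than choosing at random per call, keeps each caller on one version for the duration of an A/B test, which makes the comparison easier to interpret.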

Scaling features enable automatic resource allocation based on inference demand. The system can spin up additional containers during peak usage periods and scale down during quieter times to optimize resource consumption.
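
The scaling behavior described above can be sketched as a simple replica calculation. This is an illustration under stated assumptions: the article does not say how NIM decides when to scale, and the function name, the requests-per-second metric, and the replica bounds below are all hypothetical.

```python
import math


def desired_replicas(
    observed_rps: float,
    target_rps_per_replica: float,
    min_replicas: int = 1,
    max_replicas: int = 8,
) -> int:
    """Return how many containers are needed for the observed load.

    Hypothetical policy: one replica per `target_rps_per_replica` of traffic,
    clamped to the configured minimum and maximum.
    """
    if target_rps_per_replica <= 0:
        raise ValueError("target_rps_per_replica must be positive")
    needed = math.ceil(observed_rps / target_rps_per_replica)
    return max(min_replicas, min(max_replicas, needed))


# Peak traffic scales out; quiet periods fall back to the minimum.
print(desired_replicas(observed_rps=450, target_rps_per_replica=100))
print(desired_replicas(observed_rps=0, target_rps_per_replica=100))
```

The upper bound matters on self-hosted hardware, where the replica count cannot exceed the GPUs actually installed.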

Security features include role-based access controls and audit logging for compliance requirements. Organizations in regulated industries can maintain detailed records of model access and usage patterns.

Competing With Cloud API Providers

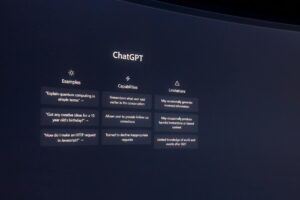

This launch puts Nvidia in direct competition with established cloud-based AI API providers like OpenAI and Anthropic. However, Nvidia’s approach targets a different segment: organizations with existing GPU infrastructure or specific data sovereignty requirements.

The economics favor companies running high-volume inference workloads. While cloud APIs charge per token or request, on-premises deployment costs remain relatively fixed after initial infrastructure investment.
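
A rough break-even calculation shows where that trade-off tips. The sketch below assumes a simplified cost model (one upfront hardware cost, a flat monthly operating cost, and a flat per-token API price), and every figure in it is made up for illustration; none comes from Nvidia or any API provider.

```python
def break_even_tokens_per_month(
    upfront_cost: float,
    monthly_fixed_cost: float,
    api_price_per_million_tokens: float,
    months: int,
) -> float:
    """Monthly token volume at which self-hosting matches per-token API spend.

    Simplified, hypothetical model: hardware is paid upfront and written off
    over `months`, and operating cost is the same every month.
    """
    if months <= 0 or api_price_per_million_tokens <= 0:
        raise ValueError("months and API price must be positive")
    total_self_hosted = upfront_cost + monthly_fixed_cost * months
    monthly_self_hosted = total_self_hosted / months
    return monthly_self_hosted / api_price_per_million_tokens * 1_000_000


# Illustrative numbers only: $240,000 of hardware, $5,000 per month to operate,
# compared with an API priced at $10 per million tokens over 24 months.
tokens = break_even_tokens_per_month(240_000, 5_000, 10.0, 24)
print(f"{tokens:,.0f} tokens per month")
```

Under these assumed figures, self-hosting only pays off above about 1.5 billion tokens per month. Below that volume the per-token API is cheaper, which is why the argument applies mainly to high-volume workloads.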

According to Nvidia’s official announcement, NIM supports deployment across on-premises data centers and private cloud environments. This flexibility allows organizations to choose infrastructure that aligns with their operational and compliance needs.

Moreover, the platform reduces latency concerns associated with cloud-based inference. Applications requiring real-time responses benefit from local model deployment, which avoids round trips to a remote cloud endpoint.

Integration With Existing Development Workflows

NIM integrates with standard container orchestration platforms including Kubernetes and Docker. Development teams can incorporate AI inference into existing deployment pipelines without adopting entirely new toolchains.

The API follows OpenAI-compatible specifications, simplifying migration for applications already using cloud-based models. Developers can switch between cloud and on-premises deployment with minimal code changes.
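
In practice, OpenAI compatibility means the request shape stays the same and only the base URL changes. The sketch below builds, but does not send, a chat completions request with Python's standard library. The local address `http://localhost:8000/v1` and the model name `example-model` are assumptions for illustration; the real values depend on how a given container is deployed.

```python
import json
import urllib.request
from typing import Optional

CLOUD_BASE_URL = "https://api.openai.com/v1"
LOCAL_BASE_URL = "http://localhost:8000/v1"  # assumed address of a self-hosted endpoint


def build_chat_request(
    base_url: str,
    model: str,
    prompt: str,
    api_key: Optional[str] = None,
) -> urllib.request.Request:
    """Build an OpenAI-style chat completions request for any compatible server."""
    body = json.dumps(
        {
            "model": model,
            "messages": [{"role": "user", "content": prompt}],
        }
    ).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Cloud APIs expect a bearer token; a local endpoint may not need one.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/chat/completions",
        data=body,
        headers=headers,
        method="POST",
    )


# Switching targets is a one-argument change; "example-model" is a placeholder.
request = build_chat_request(LOCAL_BASE_URL, "example-model", "Hello")
print(request.full_url)
```

Passing the result to `urllib.request.urlopen` would send the request. The sketch leaves that step out so it runs without a live server.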

This compatibility extends to popular AI development tools and frameworks. Teams can continue using familiar libraries and SDKs while gaining the benefits of local deployment.

Additionally, Nvidia provides documentation and sample code to accelerate integration. The company has focused on reducing the learning curve for teams transitioning from cloud-based to self-hosted AI services.

Hardware Requirements and Availability

NIM runs on Nvidia’s data center GPUs, including the H100, A100, and L40S series. The platform automatically detects available hardware and applies appropriate optimizations for each GPU architecture.

Organizations without existing GPU infrastructure can deploy NIM in private cloud environments from major providers. This option offers infrastructure flexibility while preserving more control over data than public cloud API services allow.

Pricing follows Nvidia’s enterprise licensing model rather than usage-based billing. Companies pay for software licenses and support rather than per-inference costs, making budgeting more predictable for high-volume workloads.

The platform is currently available to enterprise customers through Nvidia’s AI Enterprise software suite. Additional AI model containers will be added to the catalog in upcoming releases.

What This Means

Nvidia’s NIM platform represents a fundamental shift in AI deployment economics and control. Organizations can now choose between cloud convenience and on-premises control based on their specific requirements rather than being forced into cloud-only solutions.

For companies with substantial inference workloads, the cost advantages of self-hosted deployment become increasingly compelling. The ability to run models on owned infrastructure eliminates ongoing per-request fees that accumulate with cloud APIs.

This launch also signals Nvidia’s evolution from hardware vendor to full-stack AI platform provider. By offering both the chips and the software to run AI models efficiently, Nvidia strengthens its position across the entire AI infrastructure stack.

Finally, the emphasis on enterprise features like monitoring, versioning, and scaling indicates that AI deployment is maturing beyond experimental projects. Organizations now demand production-grade tools that support mission-critical applications with reliability and observability.