Affiliate Disclosure: toolsstackai.com participates in affiliate programs and may earn commissions on purchases made through links in this article, at no cost to you.

TL;DR: Anthropic has released Claude 4 with native multi-modal capabilities that process images, audio, and video through a unified API. The new model delivers state-of-the-art vision performance while introducing competitive pricing and enterprise-grade features including fine-tuning options.

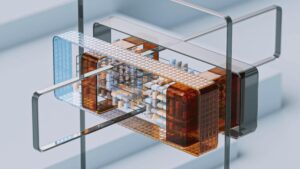

Anthropic has officially launched Claude 4, marking a significant evolution in the company’s AI offerings. The release introduces native multi-modal processing capabilities that handle images, audio, and video content through a single, streamlined API endpoint. This advancement positions Claude 4 as a comprehensive solution for developers building applications that require diverse media understanding.

Claude 4 API Brings Unified Multi-Modal Processing

The new Claude 4 API represents a fundamental shift in how developers interact with AI models. Previously, multi-modal capabilities often required separate API calls or preprocessing steps. Now, developers can send images, audio files, and video content directly to the same endpoint that handles text-based queries.

This unified approach simplifies application architecture considerably. Developers no longer need to manage multiple API integrations or coordinate between different services. The single-endpoint design can also reduce latency, since a request no longer has to make extra round trips between separate services.
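To make the single-endpoint idea concrete, here is a minimal sketch of how one request body might carry text, image, and audio together. The article does not publish the request schema, so the model name `claude-4`, the helper names, and the `audio` and `video` block types are assumptions for illustration only. The sketch builds a dictionary and makes no network call.

```python
import base64
from typing import Any

# Hypothetical model identifier; the article does not give the real one.
MODEL_NAME = "claude-4"


def media_block(media_type: str, data: bytes) -> dict[str, Any]:
    """Wrap raw bytes in a base64 content block (assumed layout)."""
    kind = media_type.split("/", 1)[0]
    if kind not in {"image", "audio", "video"}:
        raise ValueError(f"unsupported media type: {media_type}")
    return {
        "type": kind,
        "source": {
            "type": "base64",
            "media_type": media_type,
            "data": base64.b64encode(data).decode("ascii"),
        },
    }


def build_request(
    prompt: str,
    attachments: list[tuple[str, bytes]],
    max_tokens: int = 1024,
) -> dict[str, Any]:
    """Combine media attachments and a text prompt into one request body."""
    content = [media_block(media_type, raw) for media_type, raw in attachments]
    content.append({"type": "text", "text": prompt})
    return {
        "model": MODEL_NAME,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": content}],
    }


if __name__ == "__main__":
    request = build_request(
        "Describe the image and summarize the clip.",
        [("image/png", b"\x89PNG..."), ("audio/wav", b"RIFF...")],
    )
    print([block["type"] for block in request["messages"][0]["content"]])
```

The point of the sketch is that every modality travels in the same message, so the application needs only one client and one code path for sending requests.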

According to Anthropic, the model achieves state-of-the-art performance on industry-standard vision benchmarks and excels at understanding complex visual scenes, extracting text from images, and analyzing spatial relationships. The model also preserves the safety standards that have become synonymous with the Claude family of models.

Competitive Pricing Structure for Multi-Modal AI

Anthropic has introduced a tiered pricing model designed to accommodate various use cases. Standard API access costs $2 per million input tokens and $10 per million output tokens. This pricing positions Claude 4 competitively within the current AI model marketplace.

For real-time audio applications, Anthropic offers specialized streaming endpoints. These endpoints cost $0.05 per minute of audio processed. Consequently, developers building voice assistants or audio analysis tools can predict costs more accurately based on usage duration rather than token counts.

Only real-time audio is priced separately. Video processing, which requires analyzing multiple frames and audio tracks simultaneously, falls under the standard token-based pricing. Outside the streaming endpoints, the unified API means developers pay the same token rates regardless of content type.
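As a rough illustration of how these rates combine, the sketch below estimates a bill from token counts and streamed audio minutes. The rates are the figures quoted in this article. The function and constant names are made up for the example, and the sketch assumes streamed audio is billed only per minute.

```python
# Rates as quoted in this article; treat them as illustrative.
INPUT_USD_PER_MILLION_TOKENS = 2.00
OUTPUT_USD_PER_MILLION_TOKENS = 10.00
STREAMING_AUDIO_USD_PER_MINUTE = 0.05


def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    audio_minutes: float = 0.0,
) -> float:
    """Return an estimated cost in USD for one workload."""
    if input_tokens < 0 or output_tokens < 0 or audio_minutes < 0:
        raise ValueError("usage figures must be non-negative")
    token_cost = (
        input_tokens / 1_000_000 * INPUT_USD_PER_MILLION_TOKENS
        + output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION_TOKENS
    )
    audio_cost = audio_minutes * STREAMING_AUDIO_USD_PER_MINUTE
    return token_cost + audio_cost


if __name__ == "__main__":
    # 1.5M input tokens, 200k output tokens, 30 minutes of streamed audio.
    total = estimate_cost(1_500_000, 200_000, audio_minutes=30)
    print(f"Estimated cost: ${total:.2f}")  # $3.00 + $2.00 + $1.50 = $6.50
```

Output tokens cost five times as much as input tokens at these rates, so workloads that generate long responses are dominated by the output side of the bill.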

Comprehensive SDK Support and Developer Tools

Anthropic has released official SDKs for Python, TypeScript, and Java alongside the Claude 4 launch. Each SDK includes built-in retry logic to handle transient network issues gracefully. Moreover, streaming support comes standard across all three implementations, enabling real-time response generation.
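The article does not describe how the SDKs implement their retry logic. For readers unfamiliar with the pattern, the following is a generic sketch of retrying with exponential backoff and jitter. `TransientError` and `call_with_retries` are hypothetical names and are not part of any SDK.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying, such as timeouts."""


def call_with_retries(
    operation: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
    jitter: Callable[[], float] = random.random,
) -> T:
    """Call operation, retrying transient failures with doubling delays."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 1
    while True:
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # Out of attempts: surface the last error.
            # Delay doubles each attempt: base, 2*base, 4*base, ...
            delay = base_delay * 2 ** (attempt - 1)
            # Jitter spreads out retries from many clients.
            sleep(delay + jitter() * base_delay)
            attempt += 1


if __name__ == "__main__":
    calls = {"count": 0}

    def flaky() -> str:
        # Fails twice, then succeeds, to exercise the retry path.
        calls["count"] += 1
        if calls["count"] < 3:
            raise TransientError("simulated network hiccup")
        return "ok"

    print(call_with_retries(flaky, base_delay=0.01), calls["count"])
```

Passing `sleep` and `jitter` as parameters keeps the helper easy to test, since a test can record the delays instead of actually waiting.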

The SDKs abstract away much of the complexity involved in multi-modal API interactions. Developers can upload images or audio files using simple method calls. The libraries handle encoding, chunking, and transmission automatically behind the scenes.

Documentation includes extensive code examples covering common use cases. These range from basic image captioning to complex video analysis workflows. Additionally, Anthropic provides interactive notebooks that developers can use to experiment with the API before integrating it into production systems.

Enterprise Features and Fine-Tuning Capabilities

Enterprise customers gain access to advanced features not available in the standard offering. Fine-tuning capabilities allow organizations to adapt Claude 4 to domain-specific tasks and terminology. This customization can significantly improve performance for specialized applications.

Dedicated capacity options ensure consistent performance during peak usage periods. Enterprise clients can reserve computational resources, eliminating concerns about rate limits or throttling. Furthermore, these dedicated instances can be deployed in specific geographic regions to meet data residency requirements.

The fine-tuning process supports multi-modal datasets, enabling organizations to train Claude 4 on their own images, audio, and video content. Anthropic provides tools for dataset preparation and validation, along with guidance on training strategies.

Safety and Moderation Built Into the Core

Claude 4 maintains Anthropic’s commitment to AI safety across all modalities. The model includes built-in content moderation that works consistently whether processing text, images, or audio. This integrated approach helps developers build responsible applications without implementing separate filtering systems.

The safety mechanisms can identify potentially harmful content in uploaded media. They flag inappropriate images, detect concerning audio patterns, and analyze video content for violations. These protections operate without significantly affecting processing speed or accuracy.

Anthropic has published detailed documentation about Claude 4’s safety features and limitations. The company encourages developers to implement additional safeguards appropriate to their specific use cases. According to Anthropic’s official announcement, the model underwent extensive red-teaming before release.

Integration With Existing AI Workflows

Organizations already using previous Claude versions can migrate to Claude 4 with minimal code changes. The API maintains backward compatibility for text-only requests. Therefore, existing applications continue functioning while developers gradually add multi-modal capabilities.

The model integrates with popular AI development frameworks and tools. Support for widely used request formats, such as OpenAI’s API specification, makes switching straightforward. Claude 4 also works with established observability platforms, enabling comprehensive monitoring and debugging.

What This Means

Claude 4’s launch signals a maturation of multi-modal AI capabilities in production environments. The unified API approach reduces development complexity while expanding what’s possible with AI-powered applications. Organizations can now build sophisticated systems that understand text, images, audio, and video without managing fragmented toolchains.

The competitive pricing makes advanced multi-modal AI accessible to startups and enterprises alike. Real-time audio streaming at predictable per-minute rates opens new possibilities for conversational AI applications. Meanwhile, enterprise features like fine-tuning ensure that large organizations can adapt the technology to their specific needs.

For developers, Claude 4 represents a significant step forward in API design and usability. The combination of comprehensive SDK support, clear documentation, and robust safety features lowers the barrier to building production-ready multi-modal applications. As AI continues evolving beyond text-only interactions, tools like Claude 4 will become increasingly central to modern application development.